Crawly

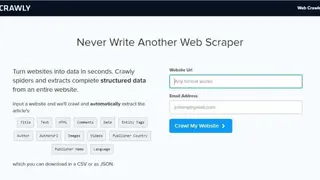

Crawly lets developers turn website content into structured data. Developers specify the website they want scraped and provide an email address where the results will be sent. Crawly then crawls the site, scrapes its content, and organizes it into structured data.

Developers can choose what to extract: titles only, text only, or all the numbers, comments, or images on the site. They can pick individual options or select all of them.

Once all the information is entered, developers just click the Crawl My Website button and relax. The solution scrapes the data, converts it into a CSV file, and emails it to the developers, who can then download it.
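The flow described above (pick the fields, extract them, get a CSV back) can be illustrated with a small sketch. This is not Crawly's implementation: the `FieldExtractor` class, the `page_to_csv` function, the field names, and the sample HTML are all hypothetical, and the sketch parses an in-memory string with Python's standard library instead of fetching a live site or sending email.

```python
import csv
import io
from html.parser import HTMLParser


class FieldExtractor(HTMLParser):
    """Collects the page title and image URLs from an HTML document."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.images = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.images.append(src)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def page_to_csv(html, fields):
    """Return CSV text containing only the requested fields (hypothetical names)."""
    parser = FieldExtractor()
    parser.feed(html)
    rows = []
    if "title" in fields:
        rows.append(("title", parser.title.strip()))
    if "images" in fields:
        rows.extend(("image", src) for src in parser.images)
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["field", "value"])
    writer.writerows(rows)
    return buffer.getvalue()


# Made-up sample page used only for illustration.
SAMPLE_HTML = (
    "<html><head><title>Demo</title></head>"
    "<body><img src='a.png'><img src='b.png'></body></html>"
)

if __name__ == "__main__":
    print(page_to_csv(SAMPLE_HTML, {"title", "images"}))
```

A real service would add fetching, crawling across pages, and delivery of the file, none of which are shown here.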

Crawly Alternatives

#1 Spider Free

Spider Free helps developers turn any website content into structured data that they can use for any purpose. The solution requires no coding skills and lets users scrape data from websites while they browse.

Users start by opening a browser, navigating to a website, and clicking the extension. The solution works as both a single-selection and a multiple-selection tool for data scraping. Users can review what they are scraping by expanding the columns, which show the full details of each selection.

Spider Free lets users save everything directly on the website, and they can add or delete columns in real time while choosing the content they want to scrape. Developers can download the content in CSV or JSON format.
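To make the column selection and the CSV or JSON download concrete, here is a minimal sketch of that export step. It is not Spider Free's code: the `export_rows` function, the column names, and the sample rows are assumptions chosen for illustration.

```python
import csv
import io
import json


def export_rows(rows, columns, fmt):
    """Serialize only the chosen columns of each row as CSV or JSON text."""
    # Dropping a column here mirrors deleting it before download.
    trimmed = [{col: row.get(col, "") for col in columns} for row in rows]
    if fmt == "json":
        return json.dumps(trimmed, indent=2)
    if fmt == "csv":
        buffer = io.StringIO()
        writer = csv.DictWriter(buffer, fieldnames=columns, lineterminator="\n")
        writer.writeheader()
        writer.writerows(trimmed)
        return buffer.getvalue()
    raise ValueError(f"unsupported format: {fmt}")


# Made-up scraped rows used only for illustration.
SCRAPED_ROWS = [
    {"name": "Widget", "price": "9.99", "sku": "W-1"},
    {"name": "Gadget", "price": "19.50", "sku": "G-2"},
]

if __name__ == "__main__":
    print(export_rows(SCRAPED_ROWS, ["name", "price"], "csv"))
    print(export_rows(SCRAPED_ROWS, ["name", "price"], "json"))
```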

#2 Reader API for DataStack

Reader API for DataStack is a tool that helps users scrape and parse websites with a single endpoint. It handles dynamically loaded websites as easily as static ones. Users can retrieve all kinds of content from a site and select the type of content they want.

Reader API for DataStack returns all content in a structured format that is easier to understand. The Reader API can read the content of any website or HTML file and return it to the user. Users can download the scraped content in formats such as CSV or JSON, and those with no coding or development knowledge can use the solution without any worry.

#3 Magic Bookmarklet

Magic Bookmarklet is a tool that turns web pages into structured data tables with a single click. It uses Magic’s patented extraction algorithms to build the tables automatically and then lets users download the static data as a CSV file.

Users can also integrate the extracted data into other platforms through their APIs. Users drag in the link they want data from, and the solution browses the web for the data, which they can download afterward.

It lets users scale their scraping capabilities to any level and get documents in any data format. Magic Bookmarklet checks the accuracy of the data and detects changes when there is an anomaly. Users can add the extension to their browsers and start using it with a single click.

#4 Web Scraper

Web Scraper is a tool that makes web scraping easier and more accessible: users can start scraping with a simple point-and-click tool. It requires no coding, and everything is kept simple so that users can understand it and use it efficiently.

The solution allows users to extract data from all kinds of static and dynamic websites. Users can use it to navigate a website on all levels, such as categories and subcategories or pagination. The solution is built for the modern web and comes with complete JavaScript execution and pagination handlers.
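Pagination handling of the kind mentioned above usually comes down to following each page's "next" link until there is none. The sketch below shows that loop under stated assumptions: it is not Web Scraper's implementation, the `PAGES` dictionary is a made-up in-memory stand-in for a site, and `fetch` and `scrape_all_pages` are hypothetical names.

```python
# Hypothetical in-memory "site": each URL maps to its items and the next page URL.
PAGES = {
    "/products?page=1": {"items": ["A", "B"], "next": "/products?page=2"},
    "/products?page=2": {"items": ["C"], "next": "/products?page=3"},
    "/products?page=3": {"items": ["D", "E"], "next": None},
}


def fetch(url):
    """Stand-in for an HTTP request plus parsing; returns the page record."""
    return PAGES[url]


def scrape_all_pages(start_url, max_pages=100):
    """Follow 'next' links from start_url and collect items from every page."""
    items = []
    seen = set()  # guards against pagination loops
    url = start_url
    while url is not None and url not in seen and len(seen) < max_pages:
        seen.add(url)
        page = fetch(url)
        items.extend(page["items"])
        url = page["next"]
    return items


if __name__ == "__main__":
    print(scrape_all_pages("/products?page=1"))
```

The `seen` set and the `max_pages` cap are defensive choices so that a site whose last page links back to an earlier one cannot trap the loop.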

Web Scraper lets users build sitemaps to tailor their data extraction methods. Users can download all of their extracted data in CSV or JSON format or export it directly to Dropbox. The solution also lets users schedule scraping jobs on any website.

#5 Online Web Scraper

Online Web Scraper is a cloud-hosted web scraping tool that lets users scrape data from the web no matter how it is stored there. Users can define which sites and which sections of a site they want to scrape, and a scheduling option is included. Developers can scrape online files and set how they want their data to be saved.

The tool lets users scrape any kind of data, such as snapshots, text, HTML files, and URLs. It supports web page pagination and multiple clicks on a single web page.

Online Web Scraper lets users save their data in formats such as CSV, JSON, or PDF. Users can download different types of data and content from a website in a single pass instead of revisiting it to download each type separately.

#6 Grabbly

Grabbly is a solution that puts the power of web data into the hands of users. It uses advanced artificial intelligence technology to detect the important data points on any page. It crawls the whole website in search of data and extracts everything from it.

The solution lets users schedule extraction whenever they want. Developers can download images from any website directly to their local download folder. It also performs deep extraction: it follows the links on the website it is extracting from and pulls data from those pages as well.

Grabbly extracts data securely and transfers it over HTTPS. Its structured data helps users make data-driven decisions and improve lead generation. Businesses can use this solution for competitor analysis to stay ahead in the game.

#7 Sitebulb

Sitebulb is an advanced website crawling and auditing tool that not only acts as a website crawler but also analyzes data from an SEO perspective. The SEO feature enables users to deliver actionable website audits to their clients, and it guides users throughout the audit. It lets users crawl any website at any time with JavaScript rendering.

Users can prioritize data with the help of its SEO analysis and automatically track progress over time. The tool provides recommendations with in-depth explanations for every issue to help users understand the whole process.

Sitebulb generates a list of common optimization checks and presents them to users as context-specific hints after the audit. Users can get a detailed history of the changes made to a website since their last audit. Sitebulb also lets users generate a PDF report of the results.

#8 Spider Pro

Spider Pro is a tool that lets users scrape a website and get its content in structured form. The solution has a clean and intuitive design and offers powerful selectors that let users extract all the content from a website, from text to images and URLs.

It requires no technical expertise and comes at a low price. Developers pay once and get unlimited scrapes and downloads. The tool runs as a browser extension, which means nothing is stored elsewhere and everything stays on the user’s computer to keep scraping secure.

Developers can scrape password-protected data right through the browser extension. It offers complete documentation to help users understand how it works and how to use it more efficiently. The solution lets users crawl multiple pages and download the scraped data as a CSV file to use anywhere they like.

#9 AnyPicker

AnyPicker is a visual web scraping tool that lets users extract web data without any code. Users set up extraction rules and add the content they want to extract. The tool connects to Google Sheets, where the scraped content is sent for further processing.

The tool offers complete privacy to developers, and users can protect their data with a password. Even if a website uses anti-scraping technology, the tool bypasses it and does the work for its users. Extraction rules are easy to set up, and users can scrape content an unlimited number of times.

AnyPicker sends all the data directly to Google Drive to keep it safe, which also saves users time. There is no limit on how many pages users can scrape at a time, and users can export data in CSV format.

#10 Kimono

Kimono offers world-leading software for data-driven decisions and operations. It helps institutions to get the data to make decisions for stability and prosperity. The solution provides software that allows organizations to integrate their data and decisions into a single platform. The software helps organizations to answer complex questions by bringing the right data to the right people.

The solution helps users work closely and meaningfully with the data, and it removes the barriers between back-end data management and front-end data analysis. It supports code branching and offers data formats that interoperate with an organization’s entire data ecosystem.

Kimono fuses the data into a human-centric model and maps it to defined objects such as people, places, and events. It also tracks and logs users’ actions when they engage with the data. The solution lets users search all the data sources at once, from unknown surface connections to divergent hypotheses.

#11 The Atlantic

The Atlantic is a digital magazine where you can read news, stories, and articles about the latest trends around the world. The site covers almost all kinds of topics, including health, lifestyle, fashion, and politics, making it one of the best online magazines for everyone.

You can also read eBooks by your favorite authors, which makes it more interesting. The site’s interface is easy to explore and offers multiple sections, including popular, latest, and events. Like similar sites, it also provides various categories, each with its own content and stories to read and share.

There is also a feature that allows you to write your own stories and share them with others. To write and share stories, you need to sign up with your name, email address, and other required details. After logging in, you can easily access all features.

#12 Linkclump

Linkclump is a productivity tool that lets users speed up their analysis and capture of links found on the web. It acts as a bridging tool that takes all the links and web domains from a page and pastes them into users’ CRM or spreadsheets.

Moreover, it lets users open, copy, or bookmark any number of links at once. With its drag box feature, users drag a box over a page to open as many links as they want in new tabs. Its smart select feature lets them select the essential links on a page while ignoring the others.

Linkclump comes with an auto-scroll feature that lets users select all links at once. Lastly, users can exclude links containing specific words in the settings and can delay the opening or closing of tabs.
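The idea of gathering every link on a page while excluding those that contain specific words can be sketched in a few lines. This is not Linkclump's code: `LinkCollector`, `collect_links`, and the sample HTML are hypothetical, and the sketch assumes the excluded words are matched against the link URL, case-insensitively.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href of every anchor tag in document order."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def collect_links(html, excluded_words=()):
    """Return all link URLs, skipping any that contain an excluded word."""
    parser = LinkCollector()
    parser.feed(html)
    lowered = [word.lower() for word in excluded_words]
    return [
        link
        for link in parser.links
        if not any(word in link.lower() for word in lowered)
    ]


# Made-up sample page used only for illustration.
SAMPLE_PAGE = (
    '<a href="https://example.com/docs">Docs</a>'
    '<a href="https://example.com/login">Login</a>'
    '<a href="https://example.com/Logout">Log out</a>'
    "<a>no href</a>"
)

if __name__ == "__main__":
    for link in collect_links(SAMPLE_PAGE, excluded_words=["log"]):
        print(link)
```

The resulting list could then be pasted into a spreadsheet or opened in tabs; that part is left out of the sketch.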