Google Cloud Container Builder

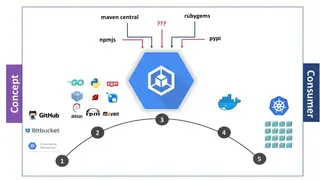

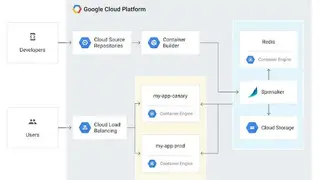

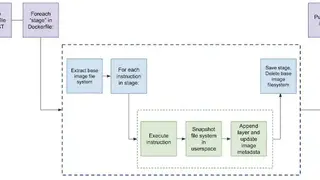

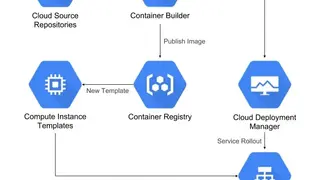

Container Builder on Google Cloud Platform (GCP) lets you build Docker containers quickly. It integrates with other GCP services, such as Kubernetes Engine, Cloud Storage, and Cloud Datastore, so you can create and manage containerized applications in one place. It is a fully managed service that orchestrates and automates the build process, so you can focus on developing your applications.

You can use it to manage the whole build process, including sources, builds, and artifacts. It also integrates with Cloud Pub/Sub and Stackdriver Logging, which helps you build, test, and deploy your containers. Container Builder works for applications of any shape, whether they are clustered applications with multiple services, microservices, or just a few containers.

Google Cloud Container Builder Alternatives

#1 Argo

Argo is an open-source suite of tools for Kubernetes that lets you run workflows, manage clusters, and practice GitOps. Its goal is to make it easier for everyone to adopt Kubernetes and contribute to its growth. With Argo, you can use Kubernetes to manage your infrastructure in a simpler and more reliable way. Argo is released under the Apache 2.0 license, making it free for anyone to use. It follows a GitOps methodology that uses version control as the source of truth for management and operations, which means that all changes to the system are tracked and can be reverted if necessary. Its main features are event-based dependency management, advanced deployment strategies, declarative continuous delivery, and a native workflow engine for Kubernetes.

#2 Data Fabric

Data Fabric by Tervela captures, shares, and distributes large volumes of data from enterprise and cloud sources to downstream applications and infrastructure. With it, you can manage your data while keeping it secure and private. Data Fabric helps you manage and orchestrate data-driven workflows across your organization, so you can move data where it needs to go, when it needs to go there.

With it, you can access your data from anywhere, share data with anyone inside or outside your organization, monitor data in real time, and respond quickly to changes. It helps data-driven enterprises make better decisions in real time by delivering data where it is needed, when it is needed, with the required performance, reliability, and security. Common use cases for Data Fabric include fraud detection, security management, sales and marketing management, and governance and compliance management.

#3 Xplenty

Xplenty is a data warehouse integration platform that aims to make data accessible to everyone. It helps businesses of all sizes collect, process, and analyze data. The platform is easy to use and scales as your business grows.

With Xplenty, you can connect to a data source and load its data into your data warehouse for analysis. It supports a variety of data formats and connectors, so you can reach data sources regardless of their location. Its drag-and-drop interface makes it easy to connect to your data and get started quickly. Xplenty helps you build a complete view of your potential customers, make data-driven decisions, and gain operational insights that support business growth.

#4 Pachyderm

Pachyderm provides the data foundation for machine learning. The platform enables businesses and data scientists to work with massive amounts of data and run machine learning models at scale. Its mission is to make it easy for everyone to use machine learning. With it, you don’t need to be a data scientist to build machine learning models, and you don’t need infrastructure or coding experience. You just need to be able to point and click.

Pachyderm is one of the leading platforms in data versioning and pipelines for MLOps, offering data-driven automation, petabyte scalability, and end-to-end reproducibility. It makes it easy for data scientists to work with large data sets, so they can train and deploy models quickly. Its features include automated data versioning, data-driven pipelines, a console, immutable data lineage, enterprise administration, and notebooks for easy data interaction.

#5 Harbor

Harbor is an enterprise-class registry server that enables organizations to securely store and share their applications and services. It is fully open-source and integrates with major container platforms, such as Kubernetes and Docker. The platform delivers compliance, performance, and, more importantly, the interoperability you need to manage artifacts consistently and securely across cloud-native platforms. Its main features are security and vulnerability analysis, content signing and validation, multi-tenant management, a web UI, an extensible API, identity integration, and role-based access control.

#6 Docker Swarm

Docker Swarm is a container orchestration tool that lets users manage multiple containers deployed across multiple host machines. It offers a high level of availability. A swarm is made up of several nodes that help users handle resources efficiently and keep the cluster operating effectively. It supports two types of services: replicated services and global services.

A replicated service specifies the number of replica tasks for the swarm manager to assign to available nodes, while a global service has the swarm manager schedule one task on each available node that meets the service constraints. Docker Swarm handles any node specialization at runtime, and users can deploy both kinds of nodes, managers and workers.
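The difference between the two service types can be sketched in a few lines of plain Python. This is a toy model, not the Docker API: the `Node` class, the `schedule` function, the label-based constraints, and the round-robin spreading are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    # Hypothetical stand-in for a swarm node.
    name: str
    labels: dict = field(default_factory=dict)
    available: bool = True


def schedule(mode, nodes, replicas=0, constraints=None):
    """Return a list of (task_name, node_name) assignments for one service."""
    constraints = constraints or {}
    # Keep only available nodes whose labels satisfy every constraint.
    eligible = [
        n for n in nodes
        if n.available and all(n.labels.get(k) == v for k, v in constraints.items())
    ]
    if mode == "global":
        # Global service: exactly one task on each eligible node.
        return [(f"task-{n.name}", n.name) for n in eligible]
    if mode == "replicated":
        if not eligible:
            return []
        # Replicated service: a fixed number of tasks, spread round-robin
        # (an assumed placement strategy, chosen for simplicity).
        return [
            (f"task-{i}", eligible[i % len(eligible)].name)
            for i in range(replicas)
        ]
    raise ValueError(f"unknown mode: {mode}")


nodes = [
    Node("node-a", {"disk": "ssd"}),
    Node("node-b", {"disk": "hdd"}),
    Node("node-c", {"disk": "ssd"}),
]
replicated = schedule("replicated", nodes, replicas=4)
global_ssd = schedule("global", nodes, constraints={"disk": "ssd"})
print(replicated)
print(global_ssd)
```

In this sketch the replicated service always yields four tasks no matter how many nodes exist, while the global service yields one task per node that matches the constraint.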

Docker Swarm lets users declare the number of tasks they want to run and scale it up or down as needed. Moreover, the swarm manager constantly monitors the cluster state and reconciles any differences between the actual state and the desired state that users have expressed.
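The idea of reconciling actual state against desired state can be illustrated with a small Python sketch. It is a simplified model under stated assumptions, not Docker Swarm's implementation: the `reconcile` function, the task names, and the "stop the newest tasks first" rule are hypothetical.

```python
def reconcile(desired_replicas, running_tasks):
    """Compare desired and actual state; return the actions that close the gap.

    `running_tasks` is assumed to be ordered from oldest to newest.
    """
    actual = len(running_tasks)
    if actual < desired_replicas:
        # Too few tasks: start enough new ones to reach the target.
        return [("start", f"new-task-{i}") for i in range(desired_replicas - actual)]
    if actual > desired_replicas:
        # Too many tasks: stop the surplus, newest first.
        surplus = running_tasks[desired_replicas:]
        return [("stop", name) for name in reversed(surplus)]
    # Actual state already matches the desired state.
    return []


scale_up = reconcile(3, ["web-1"])
scale_down = reconcile(1, ["web-1", "web-2", "web-3"])
steady = reconcile(2, ["web-1", "web-2"])
print(scale_up)
print(scale_down)
print(steady)
```

A real orchestrator would run a check like this continuously and apply the resulting actions; here the function only reports what would need to change.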

#7 Airflow

Airflow is open-source workflow management software that enables companies to schedule and monitor workflows. It uses a message queue to orchestrate an arbitrary number of workers and has a modular architecture designed to scale out. Airflow also allows users to define their own operators to suit their environment.

Workflows are written in pure Python, so users can build them with standard language features, including date and time formats for scheduling tasks. Airflow comes with a friendly web app that lets users monitor and manage their workflows. Its dashboard gives users insight into the status of all their completed and ongoing tasks.
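To give a feel for what "workflows as Python" means, here is a minimal sketch that uses only the standard library. It deliberately does not use Airflow's own API: the task names, the `dependencies` mapping, and the `next_runs` helper are hypothetical, and `graphlib.TopologicalSorter` stands in for a real scheduler.

```python
from datetime import datetime, timedelta
from graphlib import TopologicalSorter

# Hypothetical task names; each maps to the set of tasks it depends on.
dependencies = {"extract": set()}

# An ordinary Python loop generates one transform task per table.
for table in ["orders", "users"]:
    dependencies[f"transform_{table}"] = {"extract"}
dependencies["load"] = {"transform_orders", "transform_users"}

# A valid execution order: every task appears after its dependencies.
run_order = list(TopologicalSorter(dependencies).static_order())


def next_runs(start, interval, count):
    """Return the first `count` run times of a fixed-interval schedule."""
    return [start + i * interval for i in range(count)]


schedule = next_runs(datetime(2024, 1, 1), timedelta(days=1), 3)
print(run_order)
print([run.strftime("%Y-%m-%d") for run in schedule])
```

The order of the two transform tasks relative to each other is not fixed, since neither depends on the other; only "extract first, load last" is guaranteed.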

Airflow can be used for transferring data or managing infrastructure, and it does not limit the scope of the user’s data pipelines. It integrates with platforms such as Google Cloud, Microsoft Azure, and AWS, and it is free to use.

#8 Meteor

Meteor is an open-source framework for building web, mobile, and desktop applications that helps developers program effectively. It bundles the tools needed to build and run applications.

The software comes with deep integrations that let you use popular frameworks and tools efficiently. Meteor is making its mark by enabling applications that can run on any device, including Android, macOS, and Windows. It lets you ship JavaScript applications that are efficient, simple, and scalable.

With its integrated JavaScript stack, you can ship more with less code, which helps enterprises grow and improve productivity. Meteor helps you build applications on a JavaScript stack quickly and with less complexity. It also offers hosting and partner solutions for developers.

#9 Kubeflow

Kubeflow is a free, open-source machine learning platform. The software is specially designed for developing, orchestrating, deploying, and running scalable and portable machine learning workloads natively on Kubernetes. Kubeflow is a straightforward way to deploy best-of-breed open-source systems for ML to diverse infrastructure, and anywhere you are running Kubernetes, you should be able to run Kubeflow.

The software allows you to customize notebook deployments and the compute resources that your data science work needs. Kubeflow offers multiple features, including custom TensorFlow training to train ML models, TensorFlow Serving to export trained models, pipelines for agile and reliable experimentation, and support for various serverless frameworks. Kubeflow Pipelines provides a practical way to manage and deploy end-to-end ML workflows.