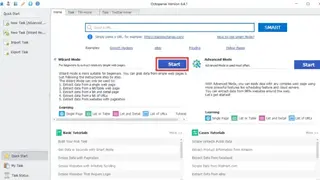

Octoparse

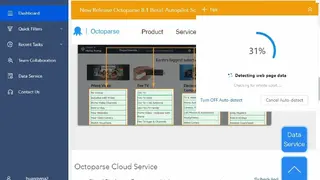

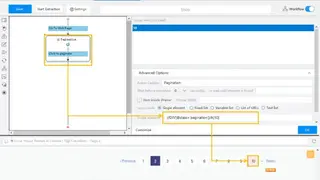

Octoparse is a powerful data extraction tool that can take your data scraping efforts to the next level. It accelerates the extraction of content from a given website, including page elements such as links, text, images, and more. It handles large web crawls efficiently and accurately, extracting the data you need in minutes instead of hours or days. With Octoparse, you save the time and money otherwise spent on manual research for new leads, which leaves room for work there was never time for before, such as reaching out to suppliers.
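To make "page elements such as links, text, and images" concrete, here is a minimal sketch of that kind of extraction using only Python's standard library. It is not Octoparse's engine or API; the class name, function name, and sample HTML are made up for illustration.

```python
from html.parser import HTMLParser


class PageElementCollector(HTMLParser):
    """Collects link targets, image sources, and visible text from HTML."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.images = []
        self.text = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "a" and attr_map.get("href"):
            self.links.append(attr_map["href"])
        elif tag == "img" and attr_map.get("src"):
            self.images.append(attr_map["src"])

    def handle_data(self, data):
        stripped = data.strip()
        if stripped:  # skip whitespace-only text nodes
            self.text.append(stripped)


def extract_elements(html):
    """Return the links, images, and text fragments found in an HTML string."""
    collector = PageElementCollector()
    collector.feed(html)
    collector.close()
    return {
        "links": collector.links,
        "images": collector.images,
        "text": collector.text,
    }


# Hypothetical sample page, used only for illustration.
SAMPLE_HTML = '<p>Hello <a href="/about">About us</a></p><img src="/logo.png" alt="logo">'
result = extract_elements(SAMPLE_HTML)
```

A dedicated tool adds crawling, scheduling, and handling of dynamic pages on top of this basic idea.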

Its versatile, high-quality extraction engine lets you pull all the content you need while saving considerable time and money. Octoparse is made for automating content extraction and web crawling, and it can work through very large numbers of web pages far faster than manual collection. All in all, Octoparse is a great tool, though the alternatives below are also worth considering.

Octoparse Alternatives

#1 Diggernaut

Diggernaut is software created specifically for web scraping, data extraction, and other ETL tasks. Used in conjunction with programming languages such as Python or JavaScript, it lets developers accomplish scraping tasks much more quickly than before, helped by running the work in the cloud.

The basic idea of the tool is that it works from links submitted from various sources. These links let developers scrape information from web pages accurately and export it to destinations such as MySQL databases, text files, or HTML pages. Another great feature of this tool is OCR scraping, which you can use with your digger to extract text from images. All in all, Diggernaut is a great tool to consider among the Octoparse alternatives.

#2 Extracty

Extracty is a web scraping service for developers who need to extract data from the web. With this tool, you can create dynamic scrapers in minutes and get clean JSON output. It covers what a scraping project needs, including optional rulesets, powerful extensions, logs, and automatic performance reports. Also included are community-created scrapers that allow you to reuse other people’s work whenever possible.

To scrape website elements, simply select the elements you want to extract, and the tool generates a matching section for you. You can edit the generated code to add custom logic and change the output format. There is no need to deal with scaling, security, or infrastructure; just deploy the endpoints and watch the process run. All in all, Extracty is a great tool to consider among the Octoparse alternatives.

#3 eScraper

eScraper is an eCommerce data scraping tool that collects data from multiple sites and prepares a relevant .csv or Excel file with all product info for your store, whether it is a PrestaShop, Magento, WooCommerce, or Shopify store. As soon as you point it at the website you want to scrape, it reads the page automatically and parses the data into .csv or Excel without requiring any coding skills.

The software is highly customizable and includes a variety of advanced features. With the search tool, you can specify the data you want to get from the site. You can manually add many different attributes or use smart filters to create more specific search queries.

Another notable feature is Smart order: if your scraper fetches product info by page, eScraper will automatically recognize and save each product in the shopping cart for you. If needed, the tool will also automatically recognize and display related products and product variations. All in all, eScraper is a great tool to consider among the Octoparse alternatives.

#4 Artoo.js

Artoo.js is a small data scraping tool in the form of JavaScript code that is meant to be run in your browser console. It works directly on the DOM of the page you are viewing and relies on jQuery for selecting elements, acting as the glue between those selections and the rest of your code. The included methods can be used to quickly create scrapers for any website that uses semantic markup, while its utilities make it easier to write robust queries and filter content in general.

With features such as spiders, sniffers, and jQuery injection, you can crawl pages through Ajax and retrieve the accumulated data, hook onto XHR requests to capture data in transit, or inject jQuery into the web pages that you want to scrape data from. All in all, Artoo.js is a great tool to consider among the Octoparse alternatives.

#5 ProxyCrawl

ProxyCrawl is an all-in-one scraping and data crawling tool that is meant for business developers. The tool can also be used by webmasters, SEO specialists, data scientists, researchers, journalists, etc. With this tool, you can import web pages, scrape website archives, and use various proxy types. The tool supports 50+ proxies and 20+ languages, including English, German, and Spanish. This allows users to quickly create a project for data mining or scraping from a large number of sources with a minimum of effort.

The tool has a convenient user interface that supports keyboard shortcuts for fast navigation between the application options. ProxyCrawl is easy to install and does not require additional libraries or frameworks. Moreover, you can track and monitor your crawling activity in real time through the live monitoring page on your account’s dashboard. You can also use the Crawler APIs to quickly check stats and manage your crawls efficiently. All in all, ProxyCrawl is a great tool to consider among the Octoparse alternatives.

#6 Webhose.io

Webhose.io, now named Webz.io, is a platform that lets you quickly locate large-scale structured data from the web, such as news, blogs, online discussions, and even dark web sites, all in one place. This repository makes your research both easier and more efficient by giving users full access to raw information that can be filtered and sorted according to specific criteria, including keywords or phrases.

The application’s user interface helps to make searching for pertinent information more efficient. It comes with a user-friendly search bar that allows users to type in the keywords or phrases they are looking for. Once you have found what you are looking for, you can narrow down your results using filters that are linked to specific social media sites. All in all, Webz.io is a great tool to consider among the Octoparse alternatives.

#7 TheWebMiner

TheWebMiner is a data scraping company providing datasets of valuable information in a usable format for online market customers. It offers data for e-commerce and internet marketing campaigns, drawn from publicly accessible websites such as internet indexes, hotel booking suppliers, and shopping websites. The data covers fields such as geographic location, language, domain, and onsite search terms. As a client, you receive what you want in the format of your choice.

It scrapes data not only from search engines but from databases too. This gives owners of online market websites another way to make their sites more competitive. Online business owners also gain usage statistics that were not previously available, without having to pay an external vendor.

TheWebMiner provides access to information that was previously out of reach of traditional website usage statistics, such as registered users and current website visitors, and supports recommending new content to users based on their previous usage patterns. All in all, TheWebMiner is a great tool to consider among the Octoparse alternatives.

#8 Hyscore.io

Hyscore.io offers a solution to the wide-reaching problem of ad fraud and poor targeting by tracking people’s devices, locations, and interests to ensure that publishers and advertisers only show their advertisements to the most relevant audience. The service is offered as a brand-safe platform for anyone who wants an effective advertising experience on any website or app on any device.

It aims to increase monetization through contextual segments that are 100% cookie-free and consent-free. Publishers benefit from having their sites indexed for a wider contextual programmatic advertising market. With simple keyword matching, you can go beyond one-dimensional IAB categorization and analyze which content works best in multiple environments. All in all, Hyscore.io is a great tool to consider among the Octoparse alternatives.

#9 Simplescraper

Simplescraper is a simple-to-use data scraping extension that doesn’t require any coding to scrape data in the cloud. You can create an API in seconds. If you deal with data or work in content marketing, chances are that at some point you’ll need to scrape a website, i.e., automatically extract information and copy it into a spreadsheet. With this tool, you can rapidly extract information from HTML pages in your browser or via API. This extension allows you to scrape any website on the fly without firing up your browser’s dev tools or writing code.

You can scrape any site with thousands of pages of data into a spreadsheet or database without affecting the performance of the website itself. Other features include running multiple scraping tasks simultaneously, sending data automatically to Google Sheets, and extracting links along with the data behind every link. All in all, Simplescraper is a great tool to consider among the Octoparse alternatives.

#10 80legs

80legs is a web scraping tool that lets you perform web crawling with ease. If you’re looking for a crawler that can handle thousands of URLs at once and do so efficiently, without you having to pay much attention to load time, this is the place for you. You can use 80legs to power web crawls, as it allows you to create and run them through the API without having to worry about intricate details like scheduling and capacity.

80legs automatically handles everything for you with a level of efficiency and scalability that has proven difficult to achieve before. It also offers the ability to integrate the service into your own application as a separate module. All in all, 80legs is a great tool to consider among the Octoparse alternatives.

#11 ScrapingAnt

ScrapingAnt is a web scraping API for extracting data from websites. To use the tool, you integrate its scraper library into your project, and information about this library is provided to you. There are no restrictions, no matter how big the target website is. Typical uses range from extracting information about the most popular products on Amazon to scraping websites for companies that need data.

With this tool, you can get ahead of competitors by researching market trends in depth and scraping product prices to help set your own. ScrapingAnt allows you to send custom cookies to the site with both GET and POST requests, so you will be able to scrape session-related data. All in all, ScrapingAnt is a great tool to consider among the Octoparse alternatives.
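As a rough illustration of what sending custom cookies with GET and POST requests involves, the sketch below builds (but does not send) such requests with Python's standard library. It is not ScrapingAnt's client or API; `build_request`, the URLs, and the cookie values are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_request(url, cookies, form_data=None):
    """Build (but do not send) a request that carries custom cookies.

    With form_data the request becomes a POST; without it, a GET.
    """
    cookie_header = "; ".join(f"{name}={value}" for name, value in cookies.items())
    body = urlencode(form_data).encode("utf-8") if form_data else None
    return Request(url, data=body, headers={"Cookie": cookie_header})


# Hypothetical session cookies and target URLs, for illustration only.
session_cookies = {"session_id": "abc123", "currency": "USD"}
get_request = build_request("https://example.com/prices", session_cookies)
post_request = build_request(
    "https://example.com/search", session_cookies, {"q": "laptop"}
)
```

Passing either object to `urllib.request.urlopen` would actually send it; a scraping API typically forwards the same cookies through its own proxy layer.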

#12 ScrapingBytes

ScrapingBytes is an API that provides reliable and efficient web scraping services. It scrapes websites for you, renders any JavaScript on the page, and employs premium proxies to give you the fastest connection possible. There is no signup form or username required; simply provide your credentials once before your first request, and then let the tool handle remote web scraping for you.

As long as the website is publicly accessible to you, there are no restrictions. The rendered content is sent back to you in the form of HTML, CSS, etc., and includes content rendered via JavaScript. You can also parse the data any way you like or into any structure. All in all, ScrapingBytes is a great tool to consider among the Octoparse alternatives.

#13 Screen Scraper

Screen Scraper is a web data extraction tool that allows users to extract data from any website according to their requirements and save it online or download it. The platform brings plenty of experience, as it is one of the oldest data extraction platforms on the market.

It allows users to download text, images, and other content automatically and at high speed. It delivers data in formats users can work with, such as TXT, HTML, and CSV. Users only have to tell the software which site and what kind of data they want to extract.

Screen Scraper manages everything else, so users can simply let the data flow. Different industries can benefit from the software; for example, the medical sector can gather health plans from different sites with a click. Lastly, it comes in free and paid versions.

#14 Scraper API

Scraper API is an online solution that offers proxies and browsers so that users can perform web scraping without getting detected or blocked. It aims to ensure that users are never stopped by IP blocks during automated web scraping. The solution also handles CAPTCHAs for users so that they can stay focused on their work.

The platform comes with customizable features that allow users to customize request headers, IP geolocation, and much more. Moreover, it automatically discards slow proxies from its pools so that the remaining proxies stay fast and reliable. The solution contains more than twenty million IPs spread across data center proxies, mobile proxies, and more.

Scraper API offers geotargeting to more than ten countries, and users can request more countries if they want. Users can get localized information from these countries and can save the web content in any format. Lastly, it ensures a hundred percent uptime to users.

#15 Agenty

Agenty is also known as agents for machine intelligence, as it offers data scraping, text extraction, change detection, and many other functions. The solution helps users to scrape data from all kinds of websites, whether they are public or password-protected. Users can use its extension, which lets them point and click to choose the content they want to scrape.

The platform allows users to perform batch URL crawling that enables them to extract data from unlimited webpages. Moreover, users can schedule their web scraping agents, and they can run them anytime they want. Users can save all the crawling history and data online and can download it anytime they want.

Agenty comes with a change detection agent that alerts users whenever a website they are interested in changes. Moreover, it offers a sentiment analysis feature that allows users to extract reviews and determine whether they are positive or negative.
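Change detection of this kind can be approximated by fingerprinting each page and comparing the fingerprint with the previous one. The sketch below shows that general idea in plain Python; it is not Agenty's implementation, and the `ChangeDetector` class and sample URL are hypothetical.

```python
import hashlib


def fingerprint(content):
    """Hash page content after collapsing whitespace, so pure
    formatting differences do not count as a change."""
    normalized = " ".join(content.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class ChangeDetector:
    """Remembers the last fingerprint seen for each URL."""

    def __init__(self):
        self._last_seen = {}

    def check(self, url, content):
        """Return True if content differs from the previous snapshot of url.

        The first snapshot of a URL is never reported as a change.
        """
        new_hash = fingerprint(content)
        old_hash = self._last_seen.get(url)
        self._last_seen[url] = new_hash
        return old_hash is not None and old_hash != new_hash


# Hypothetical page snapshots, for illustration only.
detector = ChangeDetector()
first = detector.check("https://example.com/pricing", "Plan A: $10")
same = detector.check("https://example.com/pricing", "Plan A:   $10\n")
changed = detector.check("https://example.com/pricing", "Plan A: $12")
```

A production agent would fetch the pages on a schedule and send an alert whenever `check` returns `True`.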

#16 WebHose

WebHose is a platform that helps users turn unstructured web content into readable data. The platform enables users to monitor and analyze media outlets in all languages, for example by reading and extracting news. Moreover, users can stay up to date and keep up with the conversation on message boards and forums.

The platform also allows users to get comprehensive coverage of web data sets across content domains, such as tracking updates across the blogosphere. It gives users access to the voice of their customers wherever those customers are. Users can also use this platform to uncover cyber threats on the network and to help identify data breaches in their systems.

WebHose helps financial companies to make data-driven investment decisions and allows the companies to perform effective market research. Lastly, users can also use high-quality data sets to train their artificial intelligence.

#17 Content Grabber

Content Grabber is a platform that helps users to reliably extract any data from any website to create their own data source. It comes with software that offers enterprise web data extraction solutions, known as CG Enterprise, and it is also an affordable solution. Moreover, it offers two types of licenses to users, i.e., one for the desktop and one for the server.

The software comes with guaranteed reliability and scalability that allows users to get the best web data quality. Moreover, users can add extraction functionality to their browsers by using the built-in API for easy access to extract data.

Content Grabber places no restriction on the number of pages or the amount of data, so users can extract as much as they want. Moreover, it comes with a centralized management system that helps in managing and monitoring data extraction operations. Lastly, it offers 24/7 support to users and data security.

#18 Connotate

Connotate is a solution for users who want to extract data from the web. The platform offers users a web application to extract data from the website directly. The solution requires no coding expertise or custom skills; however, users can ask the platform’s managed services section to get the data for them.

The platform offers completeness, i.e., it covers all websites and all document formats, and is scalable to billions of pages. It provides everything for the users from specification to deployment and from issues resolution to on-time delivery. Moreover, it performs human-like browsing, keeps the browsing history saved, and solves CAPTCHA automatically.

Connotate offers complete accuracy as it comes with ML-based anomaly detection that allows users to detect any kind of failure and abnormal values. Moreover, it provides users with QA workflows to ensure that only high-quality data reaches users. Lastly, it offers a data operation center for users to control all the data.

#19 ScrapeStorm

ScrapeStorm is a robust platform that helps users to extract data from websites without any code. The platform comes with a smart mode, which is based on artificial intelligence algorithms and helps in identifying list data and tabular data without any set of rules. It also automatically recognizes forms, links, images, prices, and email addresses.
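ScrapeStorm's smart mode is described above as AI-based. As a much simpler stand-in, the sketch below uses regular expressions to show what automatically recognizing email addresses and prices in page text can look like. The patterns are deliberately loose assumptions rather than complete validators, and the names are hypothetical.

```python
import re

# Loose illustrative patterns; real-world emails and prices vary more than this.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PRICE_PATTERN = re.compile(r"[$€£]\s?\d+(?:,\d{3})*(?:\.\d{2})?")


def recognize_fields(text):
    """Return the email addresses and prices found in a block of text."""
    return {
        "emails": EMAIL_PATTERN.findall(text),
        "prices": PRICE_PATTERN.findall(text),
    }


# Hypothetical page text, for illustration only.
sample = "Contact sales@example.com for the $1,299.00 bundle or the €49 add-on."
found = recognize_fields(sample)
```

Rule-based patterns like these break on unusual formats, which is the gap a learned recognizer is meant to close.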

The platform also offers a flowchart mode, in which users browse webpages manually and generate complex scraping rules in a few steps. Moreover, it comes with simulation operations that allow users to click, move the mouse, and evaluate conditions.

ScrapeStorm offers multiple data export formats, such as Excel and CSV, so users can keep the extracted data on their own system. It combines powerful scraping with high scraping efficiency to meet the needs of both individuals and enterprises. Lastly, users can save all of their tasks on the cloud server and access them anytime.

#20 Extract Anywhere

Extract Anywhere is a platform that allows users to extract web data with a powerful script builder that helps them build their own extraction rules. The software comes with an intuitive point-and-click interface, which allows users to extract data from any website or HTML document. Users can use this tool to build their database in minutes.

Extract Anywhere, from Management-Ware, allows users to extract any data and save it in their format of choice, such as Excel or CSV. It helps users to scrape various types of data and organize the extracted information into different data sets, and they have full control over their script.

Extract Anywhere allows users to navigate web pages and use their mouse to select the data they want to scrape. Lastly, it allows users to harvest data without being detected and helps them hide their IP address while they are extracting data.

#21 Ubot Studio

Ubot Studio is a platform that allows users to automate their common daily tasks for internet marketing. Users can automate almost anything they do online. The solution is compatible with almost every website and helps users in collecting and analyzing information.

It enables users to download and upload any amount of data, finish any job they want on any website, or synchronize online accounts. The platform comes with a simple drag-and-drop interface that makes the work easier. It reads data both from websites and from users’ saved files, so it can handle complex data and work with tables.

Ubot Studio allows users to build drag-and-drop automation products using its visual scripting language. Users can record actions in the browser and convert them into scripts. Lastly, users can send and receive emails daily and download the links inside them automatically.

#22 FMiner

FMiner is a tool that comes with powerful and user-friendly web scraping and data extracting features. The software comes with a visual design tool that makes the data mining project a breeze. The platform requires no coding, and users can start using it right after installing it. Moreover, it allows users to drill through the site pages through the combination of link structures.

The software offers multi-level nested extractions that help users in linking structures to capture directory content and product catalog. Moreover, it comes with a multi-browser crawling capability, which increases the pace of data extraction.

FMiner enables users to export data in different formats such as Excel, CSV, HTML, and can also export data to popular databases such as MS SQL, or Oracle. Lastly, it allows users to scrape dynamic pages in the context of static pages, and users can receive an email report when the process completes.

#23 Scrape.it

Scrape.it is a point-and-click tool that allows users to perform web scraping, crawling, and data extraction. The platform requires no programming, and it generates Web Scraping Language (WSL), which saves time otherwise spent coding custom scripts.

Moreover, users just have to express any web scrape in a sentence, and WSL performs all the tasks. The platform offers robot servers that come with unique IPs and which users can run on their browsers. It helps in automating websites, including legacy JavaScript applications, with URLs that do not change.

All the data from the web crawls are stored online, and users do not have to maintain any kind of database or server. Scrape.it comes with a browser extension, and users can crawl any website they want without leaving the page by just clicking the extension button. Lastly, it offers a 30-day free trial and offers email support to its users.

#24 CoffeeScript

CoffeeScript is a programming language that compiles into JavaScript and aims to improve its readability and brevity. The language offers a cleaner syntax, avoids the quirky parts of JavaScript, and brings out its better side. Moreover, users can use any existing JavaScript library from CoffeeScript. The language is compatible with modern JavaScript features, and its output runs natively in Node 7.6 and later.

Users must ensure that they have a stable working version of Node.js to install CoffeeScript on their system. Moreover, it comes with different commands that perform various functions; for example, the AST command generates an abstract syntax tree of nodes. Lastly, functions in CoffeeScript are defined by an optional list of parameters in parentheses, an arrow, and the function body, and, like other languages, it supports strings.

#25 Diffbot

Diffbot is a platform that allows users to transform the web into data, extracting it and saving it in different formats. The platform uses machine learning to turn the internet into accessible, structured data.

It allows users to get many kinds of data from the web without much trouble or expense. The platform analyzes web pages like a human and extracts the relevant data that users require. Users can use its API, which crawls the web and finds the items users asked for, such as products, articles, or videos.

Diffbot comes with a crawling bot that extracts data from entire sites regardless of the specific items users are after. However, users can use its structured feature to find articles on sites according to the required context. Lastly, it provides a relationship graph to let users understand how web items are related.

#26 Import.io

Import.io is an easy-to-use web-based scraping tool that you can use for grabbing data from websites and analyzing it in Excel. The interface is designed to be straightforward and accessible, which makes it super simple to use to quickly grab information from an unstructured website. The tool also lets you define what elements you want to scrape and save them in a spreadsheet so you can analyze the data later on at your convenience. You can start importing data from websites by entering the URL of the site you want to scrape into the text field at the top of the screen.

Import.io looks through each page on your target website while it is being scraped and extracts all of the elements it can find. The web page is then saved in a CSV file, which you can open in Excel for analysis if you wish. If you are not sure what elements to scrape, there are some built-in suggestions that will give you an idea about what to scrape from your website, or you can configure them manually with one click if there is information on the page that is particularly important to capture.

The other primary feature of import.io is the ability to export your data in a variety of formats, including CSV, Excel, XML, and R. All in all, you can take advantage of import.io to scrape data from your website if you are interested in exploring it for analysis or even just doing some quick data mining on the data at your convenience.

#27 Data Scraping

Data Scraping is a tool that powers intelligent business decisions with real-time data. It is a cloud-based web scraping solution for SMBs and enterprises that feeds structured data from on-demand and scheduled scrapers into your business. Data Scraping can be run on demand or on a schedule to crawl a website and extract structured records in your desired format, such as CSV, XLS, JSON, or XML.

It makes it easy to automatically extract data from web pages and gather information, such as product price and sales rank, from online retailer sites. The extracted structured data can then be used to generate reports in your desired format.
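To show what delivering structured records "in your desired format" amounts to, here is a minimal sketch that serializes the same records to CSV and JSON with Python's standard library. The record fields and values are invented for illustration, and nothing here reflects the tool's actual export code.

```python
import csv
import io
import json


def to_csv(records):
    """Serialize a list of flat dicts to CSV text, using the first
    record's keys as the header row."""
    if not records:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=list(records[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json(records):
    """Serialize the same records to pretty-printed JSON text."""
    return json.dumps(records, indent=2)


# Hypothetical scraped records, for illustration only.
records = [
    {"product": "Widget", "price": 9.99, "sales_rank": 12},
    {"product": "Gadget", "price": 24.5, "sales_rank": 3},
]
csv_text = to_csv(records)
json_text = to_json(records)
```

Keeping the records as plain dictionaries until the last step makes it easy to add further output formats later.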

Data Scraping can also extract information from specific sections of a webpage, extract embedded data, or parse metadata tags to pull out specific information. It can be used in healthcare applications, for example to extract patients’ health records. By integrating Data Scraping with other tools, you can create intelligent solutions yourself in very little time. All in all, Data Scraping is a great tool to consider among the Octoparse alternatives.
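Parsing metadata tags, as mentioned above, can be sketched with Python's built-in HTML parser. This is a generic illustration rather than the tool's own code; `MetaTagParser`, `extract_meta`, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class MetaTagParser(HTMLParser):
    """Collects <meta> tags that have a name (or property) and a content value."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = dict(attrs)
        # Standard tags use "name"; Open Graph style tags use "property".
        key = attr_map.get("name") or attr_map.get("property")
        content = attr_map.get("content")
        if key and content is not None:
            self.meta[key] = content


def extract_meta(html):
    """Return a dict mapping meta tag names to their content values."""
    parser = MetaTagParser()
    parser.feed(html)
    parser.close()
    return parser.meta


# Hypothetical page head, for illustration only.
SAMPLE_HEAD = (
    '<head><meta name="description" content="Blue widget">'
    '<meta property="og:title" content="Widget">'
    '<meta charset="utf-8"></head>'
)
meta = extract_meta(SAMPLE_HEAD)
```

Tags without a content value, such as the charset declaration, are skipped on purpose.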

#28 MainREST

MainREST is a web-scraping framework with API access. The goal is to make data extraction easy and quick. It’s specially designed for extracting data from RESTful APIs, though it can be used for other purposes as well. The library works by having the user declare the path, query-string parameters, and post body that are required for an API request, then feed that into the library’s method call to extract information from the URL specified in these instructions.

It has both synchronous and asynchronous modes of operation but always returns a Promise object when making synchronous requests in order to provide more control over what happens when scraping errors occur or particular results are not found. It minimizes calls to remote APIs and thus allows the scraping of multiple layers of authentication by varying the request parameters and post body. All in all, MainREST is a great tool to consider among the Octoparse alternatives.
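The declare-then-call workflow described for MainREST (path, query-string parameters, and post body declared up front, then handed to a method) can be sketched as follows. This is not MainREST's actual API, which is not shown here; `RequestSpec`, `build_api_request`, and the base URL are hypothetical, and the request is only built, not sent.

```python
import json
from dataclasses import dataclass, field
from typing import Optional
from urllib.parse import urlencode, urljoin
from urllib.request import Request


@dataclass
class RequestSpec:
    """Declarative description of one API call (hypothetical structure)."""
    path: str
    query: dict = field(default_factory=dict)
    body: Optional[dict] = None


def build_api_request(base_url, spec):
    """Turn a RequestSpec into a urllib Request without sending it."""
    url = urljoin(base_url, spec.path)
    if spec.query:
        url = f"{url}?{urlencode(spec.query)}"
    data = json.dumps(spec.body).encode("utf-8") if spec.body is not None else None
    headers = {"Content-Type": "application/json"} if data is not None else {}
    return Request(url, data=data, headers=headers)


# Hypothetical endpoint and parameters, for illustration only.
spec = RequestSpec(
    path="/v1/items",
    query={"page": 2, "limit": 50},
    body={"filter": "active"},
)
request = build_api_request("https://api.example.com", spec)
```

Varying `query` and `body` between calls is how the same declaration can be reused across differently authenticated or paginated endpoints.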