WebZip

WebZip is an offline browser for downloading entire websites. Once a website has been downloaded, users can open it in offline mode and access all of its content, including sounds, images, and other media files. Websites downloaded with this tool can be saved in HTML format. WebZip also integrates a newer tool, FAR, which lets you compile the downloaded content into HTML Help.

The FAR system can also save the captured website content into a single compressed ZIP file. Key features of WebZip include capturing an entire website or only the information you actually want, saving time and money on internet fees, zipping up the web, and browsing offline websites from anywhere, at any time. Its simple interface makes navigation easy.

WebZip Alternatives

#1 Cyotek WebCopy

Cyotek WebCopy is a free program for copying a full or partial website to your local PC so that you can view it offline. Once installed, it scans the website you provide and downloads its data to your hard drive, grabbing all of the site's linked resources, such as pages, images, videos, and file downloads; in short, almost everything that is part of the site.

That said, the tool has some limitations. It does not include any JavaScript or virtual DOM parsing, so if you want to copy a website that relies heavily on JavaScript, you will likely be disappointed. It also cannot download a site's raw source code; it can only download what the HTTP server returns. Its main features include rules to control the scanning process, support for forms and passwords, viewing of both internal and external links, extensive configurability, reports, a built-in regular expression editor, and a website diagram.
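To illustrate what rule-based control of a scan can look like, here is a minimal Python sketch of regular-expression URL rules. The `Rule` class, the `should_crawl` function, and the first-match-wins behavior are hypothetical assumptions for illustration; they are not WebCopy's actual rule engine or configuration format.

```python
import re
from dataclasses import dataclass


@dataclass
class Rule:
    """A hypothetical scan rule: a regex pattern plus what to do on a match."""
    pattern: str
    exclude: bool  # True: skip matching URLs; False: explicitly include them


def should_crawl(url: str, rules: list[Rule], default: bool = True) -> bool:
    """Return whether url should be scanned.

    Rules are checked in order and the first matching rule decides;
    URLs that match no rule fall back to `default`.
    """
    for rule in rules:
        if re.search(rule.pattern, url):
            return not rule.exclude
    return default


rules = [
    Rule(r"/admin/", exclude=True),  # never scan the admin area
    Rule(r"\.pdf$", exclude=True),   # skip PDF downloads
]
print(should_crawl("https://example.com/admin/login", rules))     # False
print(should_crawl("https://example.com/docs/guide.pdf", rules))  # False
print(should_crawl("https://example.com/index.html", rules))      # True
```

Ordering the rules and stopping at the first match keeps the behavior predictable: a narrow rule placed early can override a broader one further down the list.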

#2 HTTrack

HTTrack is a free offline browser that lets you download an entire website from the internet to your local PC. It is an easy, user-friendly utility that simplifies the process of downloading sites to your computer.

The program offers various other functions as well, such as retrieving HTML, images, and other files from the server and recursively building all of the site's directories on your PC.

Working with HTTrack is effortless. It arranges the original site's relative link structure, so you only need to open a page of the mirrored website in your web browser and then browse the site from link to link, just as if you were viewing it online.
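To show why a preserved relative link structure lets you browse a mirror offline, here is a minimal Python sketch of rewriting a link found on a page into a path relative to that page's local copy. The `/host/path` mirror layout and the `local_path` and `rewrite_link` helpers are hypothetical; HTTrack's real naming scheme handles many more cases (query strings, file-name collisions, and so on).

```python
import posixpath
from urllib.parse import urljoin, urlsplit


def local_path(url: str) -> str:
    """Map a URL to a path inside the mirror (hypothetical layout: /host/path).

    Directory URLs get an index.html file name; query strings and
    fragments are ignored to keep the sketch short.
    """
    parts = urlsplit(url)
    path = parts.path or "/"
    if path.endswith("/"):
        path += "index.html"
    return "/" + parts.netloc + path


def rewrite_link(page_url: str, href: str) -> str:
    """Rewrite an href found on page_url so it works in the local mirror."""
    target = urljoin(page_url, href)  # resolve relative hrefs first
    if urlsplit(target).netloc != urlsplit(page_url).netloc:
        return target  # external link: leave it pointing at the live web
    page_dir = posixpath.dirname(local_path(page_url))
    return posixpath.relpath(local_path(target), start=page_dir)


print(rewrite_link("https://example.com/blog/post.html", "/img/logo.png"))
# ../img/logo.png
print(rewrite_link("https://example.com/", "about/"))
# about/index.html
```

Because every rewritten link is relative, the mirrored folder can be moved anywhere on disk and opened directly in a browser.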

#3 GNU Wget

GNU Wget is a free tool for downloading files over the HTTP, HTTPS, and FTP protocols. Its notable features include support for filename wildcards and the ability to recursively mirror directories. It runs on UNIX-like operating systems as well as many others, including Windows. It was designed to be robust over slow or unstable network connections, which makes it a great tool for recovering downloads that keep failing.

It is also well suited to resuming aborted downloads. Other features include NLS-based message files for many languages, support for HTTP proxies and cookies, persistent HTTP connections, unattended operation in the background, and the use of local file timestamps to determine whether documents need to be re-downloaded when mirroring.
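The timestamp check used when mirroring (Wget's `-N`/`--timestamping` option) compares the local copy with what the server reports. The following is a minimal Python sketch of that decision, not Wget's actual source: `needs_download` is a hypothetical helper, and it assumes the server's `Last-Modified` header and the remote size (for example, from `Content-Length`) are already known.

```python
from email.utils import parsedate_to_datetime
from typing import Optional


def needs_download(local_mtime: Optional[float], local_size: Optional[int],
                   last_modified: Optional[str], remote_size: Optional[int]) -> bool:
    """Decide whether to re-fetch a file, in the spirit of wget's timestamping.

    local_mtime/local_size describe the existing local copy (None if absent);
    last_modified is the server's Last-Modified header and remote_size its
    Content-Length (either may be None if the server did not send it).
    """
    if local_mtime is None:
        return True  # nothing on disk yet
    if remote_size is not None and local_size != remote_size:
        return True  # sizes differ, so the local copy is stale or incomplete
    if last_modified is None:
        return True  # no timestamp to compare: play it safe (an assumption)
    remote_mtime = parsedate_to_datetime(last_modified).timestamp()
    return remote_mtime > local_mtime  # fetch only if the server copy is newer


local = 1_600_000_000.0  # local file mtime: 2020-09-13 UTC
print(needs_download(local, 3, "Mon, 01 Jan 2018 00:00:00 GMT", 3))  # False
print(needs_download(local, 3, "Fri, 01 Jan 2021 00:00:00 GMT", 3))  # True
```

Skipping unchanged files this way is what makes repeated mirroring runs cheap: only new or updated documents are transferred again.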

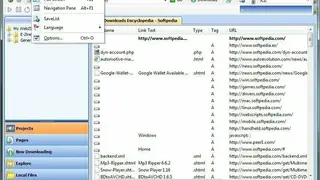

#4 Offline Explorer

Offline Explorer, also known as MetaProducts Systems Offline Explorer, is a valuable utility. One of its standout features is its user interface, which includes a built-in browser, so you do not need to install another one. Offline Explorer can download pages that contain JavaScript, Java applets, cookies, POST requests, referrers, Cascading Style Sheets, Macromedia Flash, XML/XSL files, contents files, and MPEG3 files.

Offline Explorer is an ultra-fast platform for downloading an entire website and viewing it later in offline mode on any local PC. It is a great help for webmasters, developers, and even content writers who need to gather data from various websites.

A strong point of this tool is that it lets users explore every section of a site they have downloaded with Offline Explorer in offline mode. Its main areas of functionality include new, unlimited capabilities for archiving websites, the ability to capture even social networking websites, task-based wizards that dramatically speed up your workflow, and much more.

One of the biggest advantages of Offline Explorer is that it can download an entire social media website, a feature still missing from most website downloaders. Other key features include support for BitTorrent downloads, support for HTTP/SOCKS4/SOCKS5/User@Site proxies, a built-in backup system, and much more.

#5 PageNest

PageNest is a web crawler for downloading complete websites to your system. Once the download is finished, the websites can easily be transferred to other devices in standard HTML and JPEG formats. It is one of the best options for anyone who wants to download an entire website and access it offline with all of its functionality.

The tool gives its users two options: download the whole website or download only specific pages. Its customization settings let users set the range of pages to download, and while downloading, PageNest can run multiple threads at once.

#6 BackStreet Browser

BackStreet Browser is an offline browser that lets you download your favorite websites and then explore them offline on your PC. It can deliver the downloaded website in zipped format and lets you browse through the site's contents.

Everything from HTML, graphics, sound, and Java applets to other page content is downloaded and rendered for you to browse. Key technical features of BackStreet Browser include access to password-protected websites, filters by size, type, date modified, and text, multiple retrieval threads, proxy and timeout support, user-selectable recursion levels, an option to duplicate a website's original directory structure, print and preview of files before actually downloading them, a built-in file viewer, downloading of only new and modified files, high-speed multi-threaded downloading, and much more.

#7 NCollector Studio

NCollector Studio is a universal website crawler and offline browser for easily downloading any website and then exploring it offline just as you would online. As with the live website, users can search the downloaded copy for specific files, images, videos, and so on. It is a simple program that provides all the fundamental functions you would expect from a website mirroring application.

Its main functions are offline browsing, website crawling, search providers, and website mirroring. Most people use website downloaders or crawlers so that they can browse sites offline, and NCollector Studio translates all of a website's links into local links so that you can freely browse the downloaded copy offline. In addition to the paid version, NCollector Studio has a free version, but it offers far fewer functions than the paid one.

#8 Local Website Archive

Local Website Archive is a tool for archiving multiple web pages, or even entire websites, on your local system. It can also store those pages as PDF files. In addition, it can compress the archived files into ZIP files, so you can easily email the web data to anyone you want to give access to the sites.

The best part of this platform is that it stores archived data in its original file format, so you will not have trouble accessing it later. The main features of Local Website Archive include saving both websites and individual web pages, compatibility with all leading web browsers and several online tools, archiving PDF documents, a powerful search engine, the ability to store and reuse information, and much more.

#9 SiteSucker

SiteSucker is a dedicated program that lets Mac users download their favorite websites to their computer. Its user-friendly interface offers a limited but essential set of options for the download process, and the websites it downloads can be explored in offline mode.

It copies a website's pages, audio, videos, images, PDFs, style sheets, and much more to the local hard drive, effectively duplicating the site's directory structure there.

After installing the software, you only need to enter the URL of the website and press Return; SiteSucker then starts downloading and displays the download status so you can monitor it. Websites in languages other than English can also be downloaded and saved as local copies on your system.

#10 FilePanther

FilePanther is a web crawler that lets you access all the files of any website in one go. With this application, you can explore all the links and pages of any website offline. It first stores the files of the website you provide in a local directory so that you can access all of its features offline. Besides browsing it offline, you can share it with others. It is a great help for web admins, developers, and even content writers who need data from various websites.

It first scans the target website using a crawling algorithm. This takes some time because it crawls each web page in the background, and users can set scanning priorities according to their needs. A local cache file is then created on the system, containing the files downloaded from the website during the scan. Key features of FilePanther include complete scanning of any website, saving downloaded files into cache files, automatic cache file uploading and downloading, an integrated download manager, multi-language support, extensive configurability, and more.
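Prioritized scanning with a local cache can be pictured as a crawl frontier ordered by priority. The following Python sketch is only an illustration of that idea under stated assumptions: FilePanther's internals are not described here, and the `crawl` function, the `fetch` callback, and the in-memory cache dict (standing in for a cache file) are hypothetical.

```python
import heapq
from typing import Callable


def crawl(start: str,
          fetch: Callable[[str], tuple[str, list[str]]],
          priority: Callable[[str], int],
          limit: int = 100) -> dict[str, str]:
    """Crawl from start, always visiting the URL with the lowest priority number next.

    fetch(url) returns (page_body, outgoing_links). Every fetched body is kept
    in a cache dict; a real tool would write it to a cache file instead.
    """
    cache: dict[str, str] = {}
    counter = 0  # tie-breaker so equal priorities keep discovery order
    frontier = [(priority(start), counter, start)]
    seen = {start}
    while frontier and len(cache) < limit:
        _, _, url = heapq.heappop(frontier)
        body, links = fetch(url)
        cache[url] = body
        for link in links:
            if link not in seen:
                seen.add(link)
                counter += 1
                heapq.heappush(frontier, (priority(link), counter, link))
    return cache


# A tiny in-memory "website" stands in for real HTTP requests.
site = {
    "/": ("home", ["/blog", "/docs"]),
    "/blog": ("blog", ["/docs/a"]),
    "/docs": ("docs", ["/docs/a"]),
    "/docs/a": ("doc a", []),
}


def docs_first(url: str) -> int:
    return 0 if url.startswith("/docs") else 1  # lower number = scanned sooner


print(list(crawl("/", lambda url: site[url], docs_first)))
# ['/', '/docs', '/docs/a', '/blog']
```

The heap always hands back the highest-priority URL discovered so far, so raising a section's priority makes it land in the cache first without changing the rest of the scan.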

#11 UnMHT

UnMHT is an application for viewing MHT (MHTML) web archive files and for saving complete web pages, including their text and graphics. It can store HTML, images, and CSS in a single file, and almost all leading web browsers can open the files it creates. Key features include opening any MHT file, an information panel that shows details of the ongoing process, and saving a web page as an MHT file.

It can also save a web page as MHT in its current state or as the original file, save a dynamic or static snapshot of a page, save the original files downloaded from the server, save a page with a single click, save linked pages, save a selection, save multiple tabs, store additional files in an MHT file, and much more. To use all of UnMHT's features, you must first enable JavaScript in your web browser.
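MHT files follow the MHTML layout standardized in RFC 2557: a single `multipart/related` MIME message in which the page and each of its resources is a separate part labeled with a `Content-Location` header. The sketch below uses only Python's standard `email` package to show what such a file contains. `build_mht` is a hypothetical helper, not part of UnMHT, and real tools handle many more details, such as choosing the correct MIME type for each resource.

```python
from email import encoders, message_from_bytes
from email.mime.base import MIMEBase
from email.mime.multipart import MIMEMultipart
from email.mime.text import MIMEText


def build_mht(html: str, page_url: str,
              resources: dict[str, tuple[str, bytes]]) -> bytes:
    """Pack a page and its resources into one MHTML (multipart/related) file.

    resources maps each resource URL to (MIME type, raw bytes). Each part is
    labeled with a Content-Location header so links in the HTML can be
    resolved against the parts inside the archive.
    """
    root = MIMEMultipart("related", type="text/html")
    page = MIMEText(html, "html", "utf-8")
    page["Content-Location"] = page_url
    root.attach(page)
    for url, (mime_type, data) in resources.items():
        maintype, subtype = mime_type.split("/", 1)
        part = MIMEBase(maintype, subtype)
        part.set_payload(data)
        encoders.encode_base64(part)  # binary data must be text-safe in MIME
        part["Content-Location"] = url
        root.attach(part)
    return root.as_bytes()


mht = build_mht(
    '<html><body><img src="https://example.com/logo.png"></body></html>',
    "https://example.com/",
    {"https://example.com/logo.png": ("image/png", b"\x89PNG fake bytes")},
)
archive = message_from_bytes(mht)
print(archive.get_content_type())  # multipart/related
print([p["Content-Location"] for p in archive.get_payload()])
# ['https://example.com/', 'https://example.com/logo.png']
```

Because the image is addressed by its original URL in `Content-Location`, the HTML does not need to be rewritten: a browser that reads MHTML matches the `src` attribute against the parts in the same file.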

#12 Mozilla Archive Format

Mozilla Archive Format is a web archiving extension for Firefox. The add-on saves one or more web pages, or even an entire website; saving a page means storing all of its associated files, including audio, video, and other web resources, in a single file. What sets it apart is its native MAFF format, which differs from the traditional MHTML approach: rather than encoding the page and its resources as one MIME document, as MHTML does, a MAFF file stores them in a ZIP archive.

Its main features include saving data in a single file, linking to the original page, ease of use, high compatibility, faithful snapshots, conversion of saved pages, an open-source codebase, storage of audio and video files, opening archives with a click on the icon, and a compact design. It is a strong extension for converting saved pages and handles various other tasks, from storing to downloading. Its saving system preserves all saved pages in their original form, regardless of the file format or save command you choose.

#13 MetaProducts Inquiry

MetaProducts Inquiry is an Internet Explorer tool that lets you collect and organize data from any website. If you want to store website data permanently so that you can still access it if it is later lost online, this simple, user-friendly application lets you save web information with a single click. It does more than collect data: you can organize it as well. It is available in two forms: a standalone application and an Internet Explorer integration.

Its inquiry system is also available for Opera, Mozilla Firefox, Safari, Netscape, and Maxthon, and can be accessed from the right-click context menu. Its best features include a user-friendly interface, ease of use, simple and fast storing of web pages, instant search, keeping important web pages forever, easy sharing of collected data, suitability for both beginners and experienced users, and much more. With this tool, you can save citations, references, and other information, and design your own reference styles to be stored with each document. You can also print multiple saved web pages with a single command.

#14 Darcy Ripper

Darcy Ripper is a fast, efficient, Java-based website downloader and web crawler. With this program, you can easily download web pages and web-related data on the go. The current version supports session features such as save and download, and predefined request filters are now included as well. Saved package files are self-contained, which means you can share them with anyone, anywhere.

It is a cross-platform, universal web downloader available for almost all leading operating systems, including OS X Mavericks and UNIX-based systems. Darcy Ripper's features include configurability, easy job package control, platform independence, and a job package statistics view. Configurability is its most advanced feature: it offers a large number of settings for controlling the download process so that you get exactly the web resources you want.

#15 Fresh WebSuction

Fresh WebSuction is a free offline browser. With this application, you can download any website and explore it offline. It is one of the best ways to download website content such as reference material, software files, ezine articles, news, online books, and much more, especially for users who want to keep a permanent record of a website. You can also share the saved website with others.

Its features make it one of the best programs for downloading websites, and it gives you immediate access to your favorite sites. They include settings for limiting the depth (level) of a project, the option to choose which file types/extensions the program handles, adding or removing file types/extensions, link conversion, ease of use, and much more.

#16 WebCopier

WebCopier is a simple yet highly capable program for downloading any website for free. This website downloader and web crawler reduces the time you spend online and keeps a permanent record of your chosen websites on your PC. You can access the saved website offline and share it with others. It is also useful for exploring websites, analyzing their structure, finding dead links, and transferring your work to other operating systems.

As an individual, you can save complete copies of your favorite websites, stock quotes, magazines, and much more. Companies can use WebCopier to transfer their intranet content to staff PCs, tablets, and smartphones, copy their online catalogs and brochures for sales and personal use, back up corporate websites, and print downloaded websites. In addition to the free version, there is a paid version of WebCopier with more advanced features for finding, managing, organizing, and tracking information across the internet.